I find it kind of strange that you're having technical discussions about input latency on original hardware when you don't seem to know what kind of latency original hardware has. The reality is that with contemporary hardware, contemporary video drivers, and contemporary operating systems, a properly coded emulator can get its frames to the display in less time than it takes for the display to process the next frame at 60fps. That should be entirely unsurprising, considering how much more powerful PC hardware has become in the last decade, and how much of an emphasis there now is on higher framerates and lower input latency when it comes to PC gaming. That's now bled over even to console gaming, where you're seeing 120Hz become a standard for both televisions and gaming consoles themselves.

Original hardware is entirely incapable of next-frame response, and will always have at least one frame of latency.

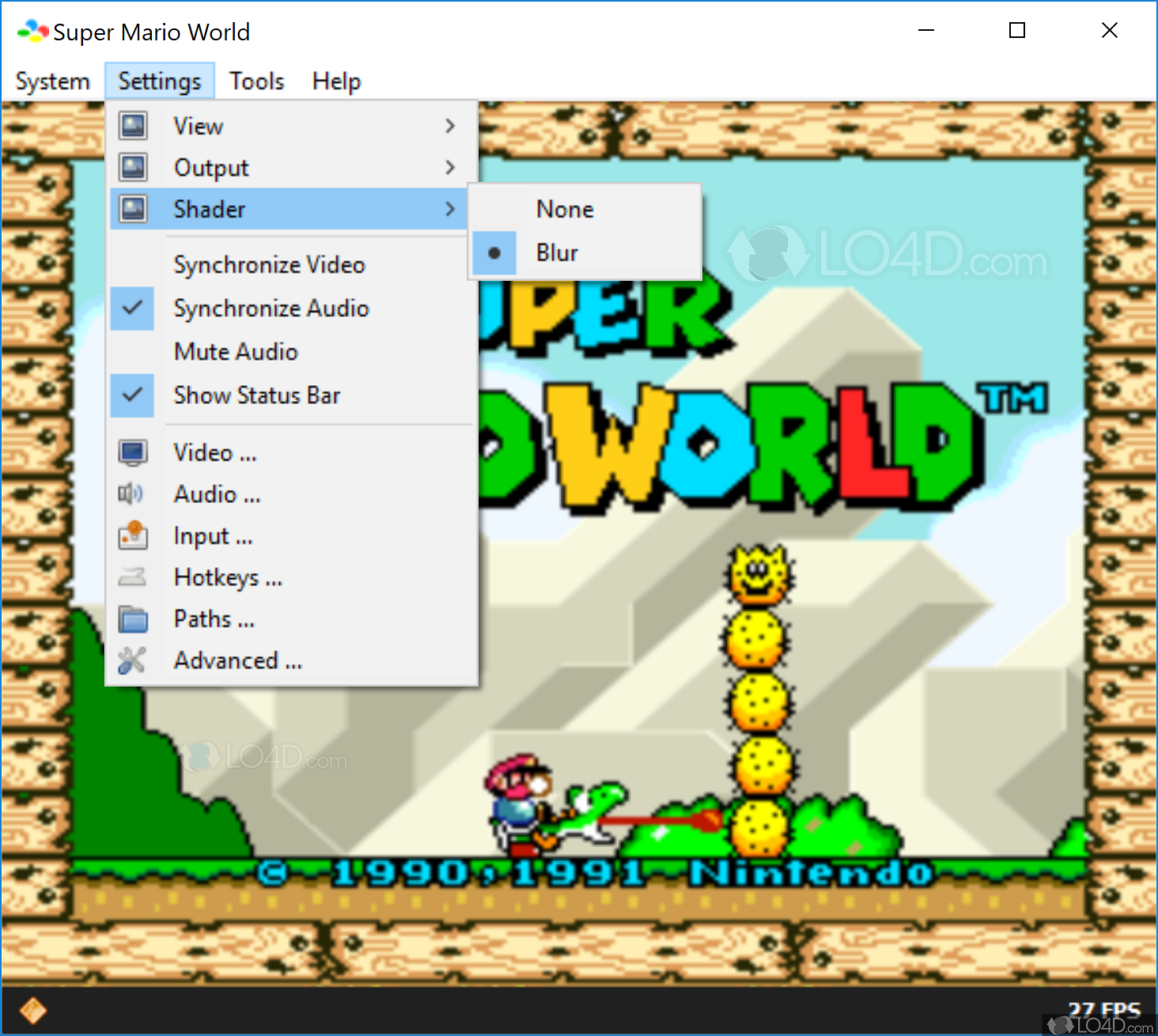

So there is almost guaranteed more lag than original hardware

It's not clear whether you're understanding what's going on: that CRT video shows next-frame response, which means there's literally zero latency.

This is my own setup, on a 34UC89-G and a Razer Panthera, on Windows 10. I'm using Vulkan and Snes9x here, with two frames of run-ahead latency reduction. Count the frames yourself if you'd like: the video file is in 240fps, and so displays four frames for each individual frame of gameplay (you'll have to hit the "download" button after opening the link to Google Drive). I have an LED wired to my button so frames can be counted more accurately, along with a frame counter OSD.

Most of my inputs have one frame of latency: e.g., you'll see the LED cut out on frame 1 when the input happens, nothing will happen on frame 2, then Mario's animation will begin on frame 3. Some of my inputs have two frames of latency. None that I could find have three frames of latency, so as you'd expect, the latency varies by one frame.

In this case, original hardware performance is two frames of latency; that's what's built into Super Mario World's engine. Which means that I have at least one frame of latency built into my setup itself, which means that RetroArch is indeed responding on the next frame, in at least some instances.

It'd be possible to get my latency lower, but my monitor has at least 14ms of latency (the price you pay for an IPS panel, apparently), and the Panthera has at least 4ms of input latency. As I claimed earlier, though: even on my non-CRT setup, I'm able to beat or match original hardware input latency in most cases where run-ahead latency reduction is possible to perform. And if I were running my own setup on a CRT with a USB controller capable of 1ms response times, then there would be zero additional input latency over original hardware, even with run-ahead latency reduction enabled, at least for this specific core. That's not a matter of speculation, that's just common knowledge.

Did Tyler Loch use a lag tester to do an equipment test on that adapter? There's a reason why someone like Bob from RetroRGB is much more thorough: he wants to be as sure as possible that his claims are correct, whereas that video doesn't really prove much to me personally. He used a "random DisplayPort to VGA adapter", so there is almost guaranteed to be more lag than original hardware and analog signals when compared to something like the MiSTer, since you still have some video processing lag involved in that signal chain. There's no test-retest to account for sub-frame variance in lag. The video doesn't compare with original hardware directly; he just says that Mario jumps within one frame of lag.

I'll wait for a more proper test demonstration than that video to prove otherwise.
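
For anyone who wants to check the frame counting described above, here is a rough sketch of the arithmetic in Python. It is only an illustration: the function names are made up for this example, it assumes the game runs at exactly 60fps and that the 240fps capture lines up cleanly with the displayed frames, and it uses the counting convention from the post (input on frame 1, animation on frame 3 counts as one frame of latency).

```python
# Assumed values, taken from the figures quoted in the post.
CAPTURE_FPS = 240  # frame rate of the recorded video
GAME_FPS = 60      # assumed game/display frame rate


def capture_frames_per_game_frame(capture_fps=CAPTURE_FPS, game_fps=GAME_FPS):
    """How many video frames show each frame of gameplay."""
    return capture_fps // game_fps


def game_frame_index(capture_frame, capture_fps=CAPTURE_FPS, game_fps=GAME_FPS):
    """Map a 0-based video frame number to a 1-based game frame number."""
    per = capture_frames_per_game_frame(capture_fps, game_fps)
    return capture_frame // per + 1


def latency_frames(input_capture_frame, reaction_capture_frame):
    """Game frames of latency, using the post's convention:
    input on frame 1 and reaction on frame 3 is one frame of latency."""
    first = game_frame_index(input_capture_frame)
    last = game_frame_index(reaction_capture_frame)
    return last - first - 1


def ms_to_game_frames(ms, game_fps=GAME_FPS):
    """Express a delay in milliseconds as a fraction of game frames."""
    return ms * game_fps / 1000.0


if __name__ == "__main__":
    print(capture_frames_per_game_frame())  # video frames per game frame
    print(latency_frames(2, 9))             # LED changes in game frame 1, animation in frame 3
    print(ms_to_game_frames(14 + 4))        # monitor + controller delay, in game frames
```

Under those assumptions, the 14ms monitor figure plus the 4ms controller figure comes to slightly more than one 60fps frame, which lines up with the claim that at least one frame of latency is built into the setup itself.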
